I would like to suggest some steps to resolve this issue:

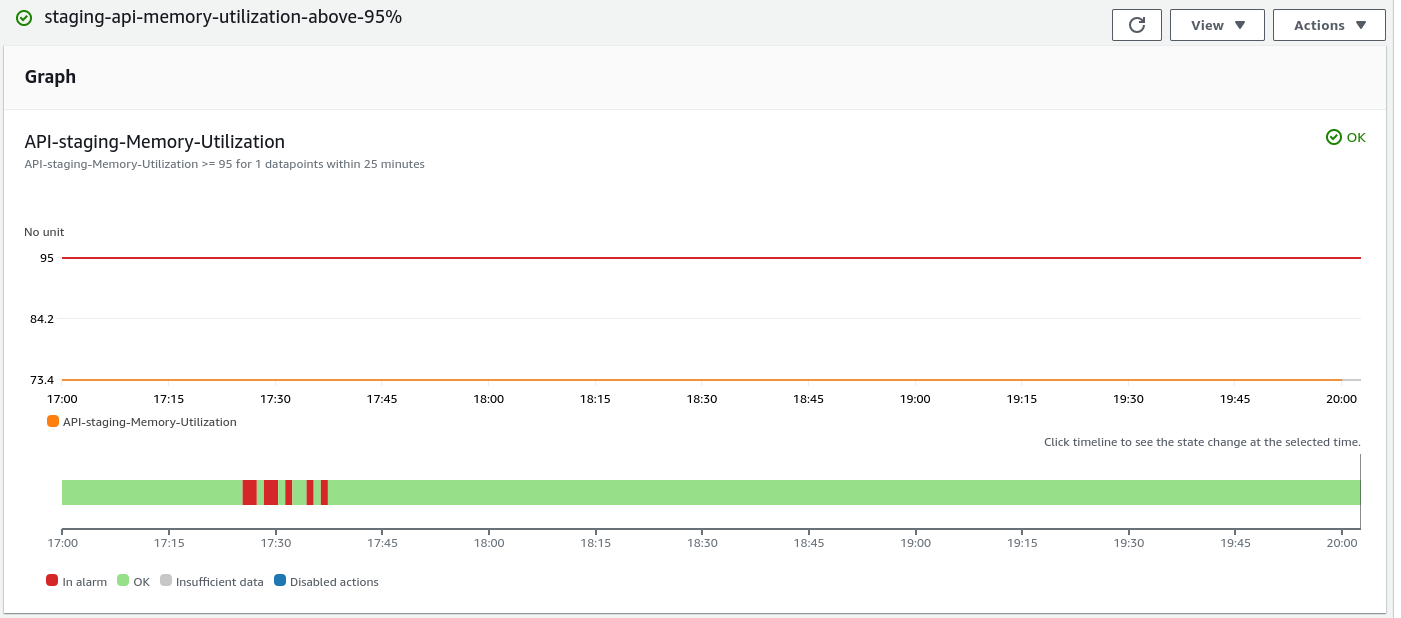

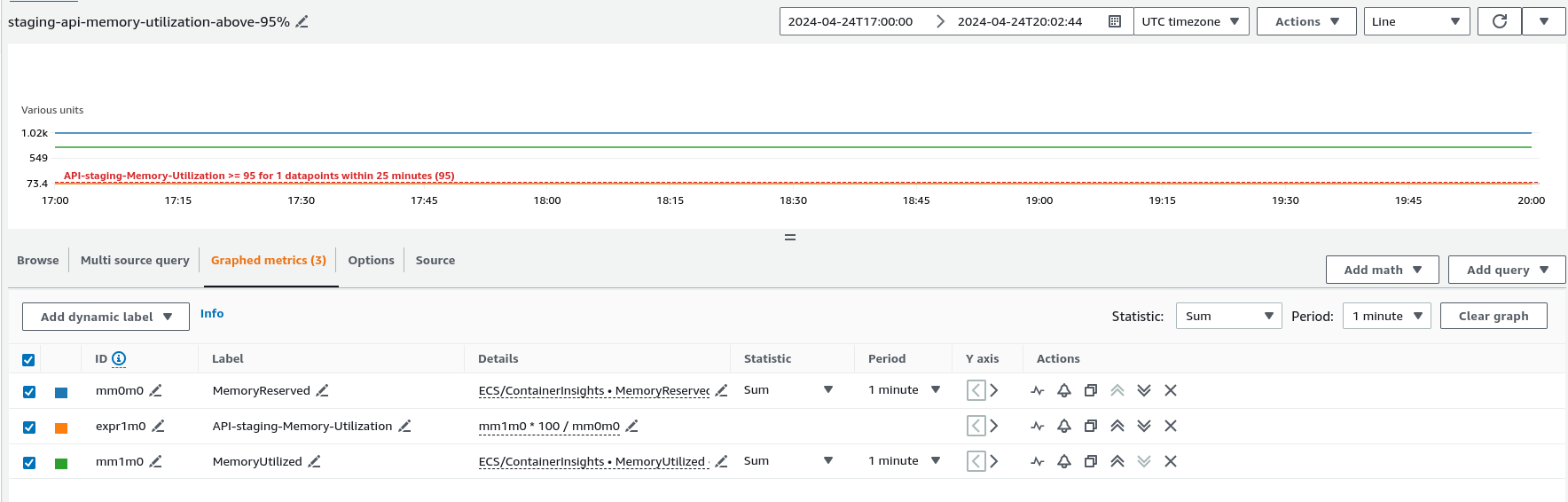

1. Verify Alert Configuration: Check that the alert threshold is correctly set at 95% and targets the right ECS containers.

2. Confirm Metric Accuracy: Double-check the metric data in CloudWatch Metrics to ensure it accurately reflects memory usage.

3. Review ECS Setup: Check ECS container configurations and investigate for any memory-intensive tasks or issues.

4. Monitor Alarm State Changes: Look into CloudWatch Alarm History for patterns in alarm triggers.

5. Adjust Alert Actions: Review and adjust alert actions if necessary, ensuring they are appropriate.
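Step 1 can be partly automated. Below is a minimal sketch, assuming the alarm is available as a dict shaped like one entry of `MetricAlarms` in the CloudWatch `describe_alarms` response. The function name `review_alarm` and the cluster and service names in the usage example are hypothetical, chosen only for illustration.

```python
from typing import Any, Dict, List, Optional


def review_alarm(
    alarm: Dict[str, Any],
    expected_threshold: float = 95.0,
    expected_dimensions: Optional[Dict[str, str]] = None,
) -> List[str]:
    """Return a list of human-readable findings for one alarm description.

    `alarm` is assumed to look like one `MetricAlarms` entry from
    `describe_alarms` (keys such as Threshold, Dimensions, TreatMissingData).
    """
    findings: List[str] = []

    # Step 1a: is the threshold the one we think it is?
    threshold = alarm.get("Threshold")
    if threshold != expected_threshold:
        findings.append(f"Threshold is {threshold}, expected {expected_threshold}")

    # Step 1b: does the alarm target the intended ECS resource?
    actual = {d["Name"]: d["Value"] for d in alarm.get("Dimensions", [])}
    for name, value in (expected_dimensions or {}).items():
        if actual.get(name) != value:
            findings.append(
                f"Dimension {name} is {actual.get(name)!r}, expected {value!r}"
            )

    # Settings that can trigger an alarm even when the graph looks normal.
    if alarm.get("TreatMissingData", "missing") == "breaching":
        findings.append("Missing data is treated as breaching")
    if alarm.get("ExtendedStatistic") and (
        alarm.get("EvaluateLowSampleCountPercentile", "evaluate") != "ignore"
    ):
        findings.append("Percentile alarm is evaluated even with few samples")

    return findings


# Usage with a hand-written (hypothetical) alarm description.
example_alarm = {
    "Threshold": 90.0,
    "Dimensions": [
        {"Name": "ClusterName", "Value": "prod-cluster"},
        {"Name": "ServiceName", "Value": "api"},
    ],
    "ExtendedStatistic": "p99",
    "TreatMissingData": "missing",
}
for finding in review_alarm(
    example_alarm,
    expected_dimensions={"ClusterName": "prod-cluster", "ServiceName": "worker"},
):
    print(finding)
```

Running the same check over every alarm in the account makes it easier to see whether the one misbehaving alarm differs from the others in any setting.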

For details, see the documentation: https://docs.aws.amazon.com/AmazonCloudWatch/latest/monitoring/AlarmThatSendsEmail.html#common-features-of-alarms

Hello.

What is the CloudWatch alarm's "treat missing data" setting?

Depending on the contents of this setting, an alarm may occur even if the metrics appear normal.

https://docs.aws.amazon.com/AmazonCloudWatch/latest/monitoring/AlarmThatSendsEmail.html#alarms-and-missing-data

You may also be able to check the reason for the alarm by looking at the CloudWatch Alarm history.

https://docs.aws.amazon.com/AmazonCloudWatch/latest/monitoring/AlarmThatSendsEmail.html#common-features-of-alarms

It is set to treat missing data as missing, but we are evaluating percentiles with low sample counts, so I will take a look at that setting.
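To judge whether a percentile alarm is running on "low samples", here is a rough sketch based on my reading of the CloudWatch documentation: for percentiles from 0.5 up to (but not including) 1, the low-sample setting applies when an evaluation period has fewer than 10/(1 - percentile) data points, and for percentiles below 0.5, fewer than 10/percentile. The function names are hypothetical, and the rule should be checked against the current docs before relying on it.

```python
from fractions import Fraction


def low_sample_threshold(extended_statistic: str) -> Fraction:
    """Minimum sample count below which the low-sample setting applies.

    `extended_statistic` uses the alarm's ExtendedStatistic format, e.g. "p99".
    Fractions are used so values such as 0.9 do not pick up float rounding error.
    """
    if not extended_statistic.startswith("p"):
        raise ValueError("expected a percentile statistic such as 'p99'")
    p = Fraction(extended_statistic[1:]) / 100
    if not 0 < p < 1:
        raise ValueError("percentile must be strictly between p0 and p100")
    if p >= Fraction(1, 2):
        return 10 / (1 - p)
    return 10 / p


def is_low_sample(extended_statistic: str, sample_count: int) -> bool:
    """True if the period has too few samples for the given percentile."""
    return sample_count < low_sample_threshold(extended_statistic)


print(low_sample_threshold("p99"))   # 1000
print(is_low_sample("p99", 300))     # True
```

If the alarm falls into the low-sample range, the `EvaluateLowSampleCountPercentile` alarm setting (`evaluate` or `ignore`) decides whether those periods can change the alarm state.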


I double- and triple-checked the whole setup, and everything looks correct. We have the same setup for a number of other ECS instances, and the problem occurs only for this one. It might have been temporary, because it hasn't happened since.